Increasing KVM Guest Hard Disk (Hard Drive) Space

Increasing the hard drive space in a KVM guest can be rather tricky. The first step is to shut down (completely power off) the guest machine by running the following command from inside the guest:

sudo shutdown -h now

Once the guest has powered off, verify it on the host, for example by opening sudo virt-manager and checking that the guest is no longer running. Then, still on the host, extend the logical volume that backs the guest's disk by running the following command. Adjust the size as necessary; the + prefix adds to the current size rather than setting an absolute size:

sudo lvextend -L+78G /dev/vg_vps/utils
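The shutdown check and the resize can be combined so that the volume is never extended while the guest is still running. The following is a minimal sketch under that assumption, not a definitive implementation: extend_guest_lv is a hypothetical helper name, and it relies on virsh domstate reporting "shut off" for a powered-off guest.

```shell
#!/usr/bin/env bash
# Sketch: extend a guest's backing logical volume only if the guest is off.
# extend_guest_lv is a hypothetical helper, not a libvirt or LVM command.
extend_guest_lv() {
    local domain="$1" lv_path="$2" extra="$3"
    local state

    # virsh domstate prints the guest state, e.g. "running" or "shut off".
    state="$(sudo virsh domstate "$domain")" || return 1

    if [ "$state" != "shut off" ]; then
        echo "Guest '$domain' is '$state'; shut it down first." >&2
        return 1
    fi

    # -L +SIZE grows the logical volume by SIZE.
    sudo lvextend -L "+${extra}" "$lv_path"
}

# Example with the names used in this article:
# extend_guest_lv utils /dev/vg_vps/utils 78G
```

Running sudo lvs afterwards shows the new size of the logical volume.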

If you need help identifying the name of the disk your guest has been assigned, run this command from the host:

sudo virsh domblklist {VIRSH_NAME_OF_VIRTUAL_MACHINE}

For my example, I would use this command:

sudo virsh domblklist utils
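The output of domblklist is a small table with a Target column (the device name inside the guest) and a Source column (the path on the host). The Source path is the one to pass to lvextend. As a rough sketch, the Source column can be pulled out with awk; guest_disk_sources is a hypothetical helper name, and the parsing assumes the usual two header lines and source paths without spaces.

```shell
#!/usr/bin/env bash
# Sketch: list the host-side source paths of a guest's disks.
# guest_disk_sources is a hypothetical helper, not part of virsh.
guest_disk_sources() {
    local domain="$1"
    # Skip the header and separator lines, print the Source column,
    # and ignore drives with no media attached (shown as "-").
    sudo virsh domblklist "$domain" |
        awk 'NR > 2 && NF >= 2 && $2 != "-" { print $2 }'
}

# Example with the guest name used in this article:
# guest_disk_sources utils
```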

On the host machine, download the GParted Live ISO image that matches your guest's architecture (32-bit or 64-bit), then start virt-manager:

sudo virt-manager

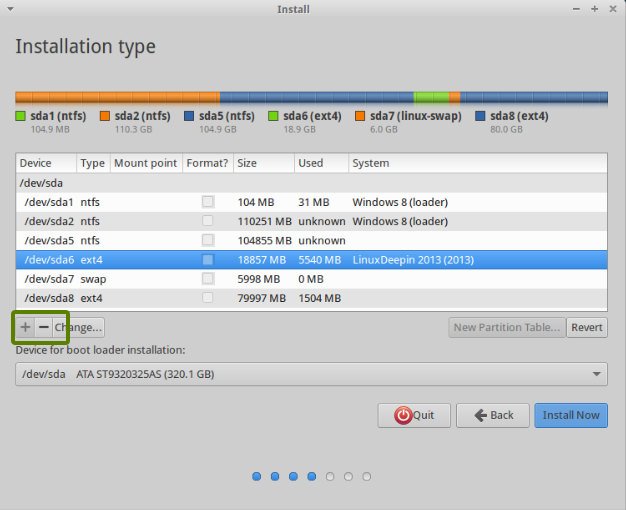

In virt-manager, add a CD drive to the virtual machine whose disk you're expanding, and attach the GParted ISO to it. Change the boot order so that the KVM guest boots from the CD first, then save your settings and start the guest.

Boot into GParted Live; GParted will start automatically. Use it to expand the partitions into the added storage according to your own preferences, then apply the resize operation.

Exit GParted and shut down the virtual machine so that it's off again. Remove the CD drive from the boot options in virt-manager, and then start the KVM guest again.

If Guest Doesn't Use LVM Partitioning

If your KVM guest virtual machine hasn't been configured to use LVM, the added hard drive space should already be available to your system once it boots. Verify that the filesystem has grown by running the df -h command in the guest. You're done!
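For a quicker check, df can be limited to a single mount point. This small example assumes the resized partition is mounted at /; substitute another mount point if yours differs.

```shell
# Show size, used and available space for the filesystem mounted at / only.
df -h /
```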

If Guest Uses LVM

Let the OS boot. Inside the guest, the logical volume and the file system on it still need to be resized. First, run the following command in the guest to see the space currently allocated to each of your system's file systems:

df -h

You'll see a bunch of output similar to:

Filesystem Size Used Avail Use% Mounted on

udev 2.9G 0 2.9G 0% /dev

tmpfs 597M 8.3M 589M 2% /run

/dev/mapper/utils--vg-root 127G 24G 98G 20% /

tmpfs 3.0G 0 3.0G 0% /dev/shm

tmpfs 5.0M 0 5.0M 0% /run/lock

tmpfs 3.0G 0 3.0G 0% /sys/fs/cgroup

/dev/vda1 720M 60M 624M 9% /boot

tmpfs 597M 0 597M 0% /run/user/1000

You'll notice that the added hard drive space doesn't show up on any of the file systems. However, it is available to be assigned to them. To assign it, extend the logical volume and then grow the file system using these commands (run from the guest virtual machine, that is, the machine you're resizing):

sudo lvextend -L +78G /dev/mapper/utils--vg-root

sudo resize2fs /dev/mapper/utils--vg-root

Substitute the name of your own logical volume, as shown in your output of the df -h command, for /dev/mapper/utils--vg-root. Note that resize2fs only handles ext2, ext3, and ext4 file systems.
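If lvextend reports that there is not enough free space, the LVM physical volume may not have picked up the enlarged partition yet. The sketch below is a variant of the two commands above rather than the exact method used in this article: it runs pvresize first, then uses -l +100%FREE to take all free space in the volume group and -r to resize the file system in the same step, so a separate resize2fs call is not needed. grow_root_lv is a hypothetical helper name, and /dev/vdaN is a placeholder for whichever partition holds your physical volume (sudo pvs lists it).

```shell
#!/usr/bin/env bash
# Sketch: grow a logical volume and its file system into all free space.
# grow_root_lv is a hypothetical helper, not an LVM command.
grow_root_lv() {
    local pv_device="$1" lv_path="$2"

    # Make LVM notice that the partition under the physical volume has grown.
    sudo pvresize "$pv_device" || return 1

    # Show how much free space the volume group now has.
    sudo vgs

    # Extend the logical volume into all free extents;
    # -r also resizes the file system on it.
    sudo lvextend -r -l +100%FREE "$lv_path"
}

# Example (replace /dev/vdaN with the partition that holds the physical volume):
# grow_root_lv /dev/vdaN /dev/mapper/utils--vg-root
```

Running df -h again afterwards should show the larger size on the root file system.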

Resources

https://tldp.org/HOWTO/LVM-HOWTO/extendlv.html

https://sandilands.info/sgordon/increasing-kvm-virtual-machine-disk-using-lvm-ext4